Claude vs Gemini: Which AI Is Better for Executives?

Claude vs Gemini — an honest comparison. Claude wins on writing and strategic reasoning. Gemini wins if your team runs on Google Workspace. Here's how to decide.

The right answer here depends almost entirely on one question: does your organization run on Google Workspace? If yes, that changes the comparison significantly.

Model and pricing data as of April 17, 2026. Verify at claude.ai/pricing and gemini.google/subscriptions before making a purchase decision.

| | Claude | Gemini |
|---|---|---|
| Vendor | Anthropic | Google |
| Current flagship | Claude Opus 4.7 (Apr 2026) | Gemini 3 Pro / 3.1 Pro |
| Free tier | Claude Sonnet, limited usage | Gemini 3 Flash, limited 3.1 Pro access |
| Individual (standard) | Pro: $20/month | Google AI Pro: $19.99/month |
| Individual (premium) | Max 5x: $100/month · Max 20x: $200/month | Google AI Ultra: $249.99/month |
| Team / business | Team Standard: $20–25/seat · Team Premium: $100/seat | Workspace + Gemini add-on: varies |
| Enterprise | Custom (SSO, audit logs) | Workspace Enterprise + Gemini |
| Context window | 1M tokens (auto on Max/Team/Enterprise; opt-in on Pro) | 1M (AI Pro, Gemini 2.5 Pro) · 2M (Gemini 3.1 Pro) |
| G2 rating | 4.7 / 5 | 4.4 / 5 |

Quick Verdict

Our Verdict

Winner: Claude — unless your org runs on Google Workspace

For executive thinking work — strategy, decision analysis, difficult communication, board prep — Claude is the better tool, and not by a small margin. Gemini's genuine advantage is integration: AI embedded inside Gmail, Docs, Sheets, and Meet. That's an infrastructure decision, not a thinking tool decision. If you only need one answer: start with Claude. Add Gemini if your org is on Google Workspace and you want AI threaded through your daily email and documents.

- Executive thinking partner (default) → Claude

- Org on Google Workspace, want embedded AI → Gemini

- Multimodal analysis (images, audio, video) → Gemini

- Strategic writing that sounds like you → Claude

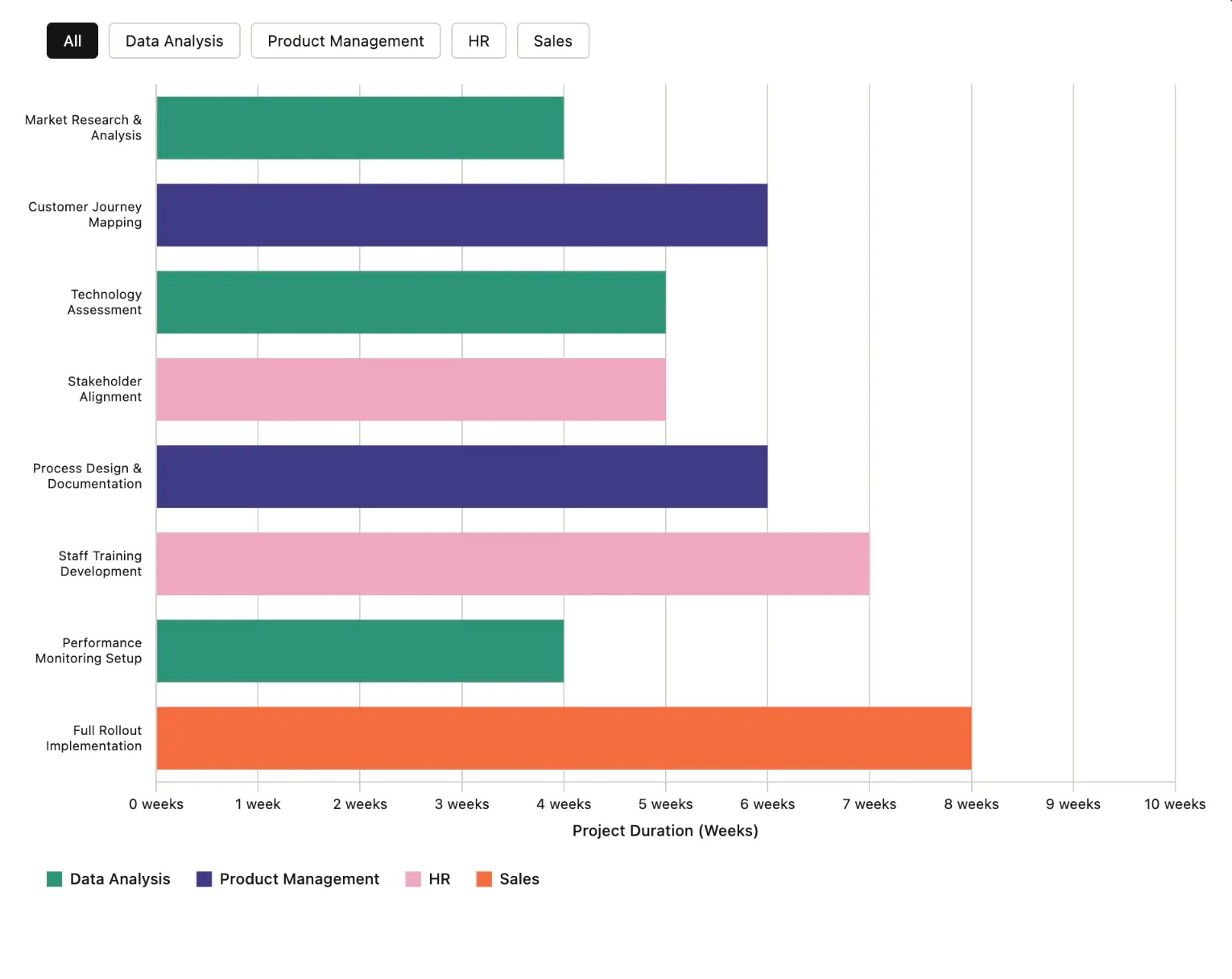

Feature Scores

Scores reflect practical output quality in real executive workflows — strategy, communication, and decision-making — not spec differences. A tool that produces generic output scores lower than one that produces output you'd actually use. Scores based on testing as of April 2026.

| Feature | Claude | Gemini |
|---|---|---|
| Strategic Analysis Depth | 9 | 7 |
| Writing Quality & Voice Calibration | 9 | 6 |
| Google Workspace Integration | 3 | 10 |
| Multimodal (Image / Audio / Video) | 7 | 9 |
| Real-Time Web Search | 7 | 9 |
| Context Window (Practical Quality) | 9 | 7 |
| Team Deployment (Google Orgs) | 5 | 9 |
| Extended / Chain-of-Thought Reasoning | 9 | 7 |
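One way to apply these scores to your own situation is to weight them by what your organisation actually needs. The sketch below is illustrative only: the scores are copied from the table above, but the weights and the `weighted_scores` helper are assumptions introduced here, not part of how the scores were produced.

```python
# Scores copied from the Feature Scores table: (Claude, Gemini).
SCORES = {
    "Strategic Analysis Depth": (9, 7),
    "Writing Quality & Voice Calibration": (9, 6),
    "Google Workspace Integration": (3, 10),
    "Multimodal (Image / Audio / Video)": (7, 9),
    "Real-Time Web Search": (7, 9),
    "Context Window (Practical Quality)": (9, 7),
    "Team Deployment (Google Orgs)": (5, 9),
    "Extended / Chain-of-Thought Reasoning": (9, 7),
}


def weighted_scores(weights, default=1.0):
    """Return (claude, gemini) weighted averages on the table's 10-point scale.

    `weights` maps a feature name to its importance; features not listed
    use `default`. The weights are the reader's judgment (hypothetical).
    """
    total = claude = gemini = 0.0
    for feature, (c, g) in SCORES.items():
        w = weights.get(feature, default)
        total += w
        claude += w * c
        gemini += w * g
    if total == 0:
        raise ValueError("at least one feature needs a non-zero weight")
    return claude / total, gemini / total


# Hypothetical non-Google org: the two Workspace rows do not apply.
non_google = weighted_scores({
    "Google Workspace Integration": 0.0,
    "Team Deployment (Google Orgs)": 0.0,
})
print(f"Non-Google org: Claude {non_google[0]:.2f}, Gemini {non_google[1]:.2f}")
# prints "Non-Google org: Claude 8.33, Gemini 7.50"

# Hypothetical Google Workspace org: every row counts equally.
google_org = weighted_scores({})
print(f"Google org:     Claude {google_org[0]:.2f}, Gemini {google_org[1]:.2f}")
# prints "Google org:     Claude 7.25, Gemini 8.00"
```

With the two Workspace rows switched off, Claude leads; with every row counted equally, Gemini edges ahead. A simple average is a blunt instrument, so treat the result as a prompt for discussion rather than a verdict.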

Detailed Breakdown

Strategic Analysis Depth

This is where the gap is largest, and where it matters most for executive work.

Claude handles genuinely complex, politically loaded situations that don't resolve cleanly. You can describe a VP who's underperforming but politically protected, paste in six months of board feedback, and ask "what's the real problem here and what are my options?" — and get analysis that holds the tension rather than compressing it into a tidy 3-point framework. Claude treats ambiguity as information, not as something to be resolved away.

Gemini handles strategic questions competently. The output is well-organised and arrives fast. But the register skews toward structure over judgment — it tends to produce a framework for thinking about your question rather than an actual perspective on it. Ask Gemini a hard org question and you'll get five considerations. Ask Claude the same question and you'll get a read.

Score rationale: On Vellum's LLM Leaderboard (April 2026), Claude Opus 4.7 scores 94.2% on GPQA Diamond and 87.6% on SWE-bench Verified. Gemini 3 Pro sits at 91.9% on GPQA Diamond on the same leaderboard. Both are strong on standardised reasoning. The strategic analysis advantage is qualitative — it's about style and output register for ambiguous business problems, not a decisive gap on benchmarks.

Writing Quality & Voice Calibration

Both tools produce professional prose. The gap is in default register and voice matching.

Claude's default output is less formulaic. It doesn't open every email with a pleasantry, doesn't close every paragraph with a summary sentence, and adjusts more naturally to the register of what you give it. When you run the voice calibration exercise — paste three writing samples, ask for a voice profile, apply that profile to every future draft — Claude's outputs genuinely start sounding like you rather than like a corporate writing assistant. For a deeper look at how this works in practice, see the 10-minute executive meeting prep workflow.

The practical test: write a board-level narrative with Claude versus Gemini on the same brief. Claude produces something that could credibly carry your name. Gemini produces something that's correct, clean, and slightly generic — the kind of prose that reads as AI-assisted to anyone paying attention.

For executive communication where the writing needs to carry your authority, that's not a small distinction. Your board can tell.

Score rationale: For executives who want AI drafts they can send with minimal editing, Claude's voice calibration is a practical day-to-day advantage, not just a benchmark difference.

Google Workspace Integration

As of April 2026: Gemini's Workspace integration covers Gmail, Docs, Sheets, Slides, Meet, and Drive. Claude has no comparable native integration. Verify feature availability with your Google Workspace admin.

Gemini wins this category decisively. It's embedded natively in Gmail, Google Docs, Google Sheets, Google Slides, Google Meet, and Google Drive. In Gmail, it drafts reply emails, summarises threads, and suggests follow-ups inside the compose window. In Docs, it drafts, edits, and summarises without leaving the document. In Sheets, it builds formulas and analyses data from natural language prompts. In Meet, it generates meeting summaries post-call and saves them to Drive automatically.

For an executive whose day runs through Gmail and Google Docs, this is AI inside the workflow rather than alongside it. The context switch to a separate Claude window — copying text, pasting prompts, copying output back — adds friction that Gemini eliminates when you're in the Google ecosystem.

Claude has a web interface and API. It does not have native integration with Google Workspace at this depth. This is not a gap that plugins or workarounds fully close.

Score rationale: If your team is on Google Workspace, Gemini's integration alone is worth serious consideration. For teams not on Google Workspace, this advantage disappears entirely.

Multimodal (Image / Audio / Video)

As of April 2026: Claude handles images natively but does not process audio or video files. Gemini supports image, audio, and video natively.

Gemini was built multimodal from the ground up. It analyses images, audio recordings, and video files natively — including long video. An executive can upload a recorded presentation, a competitor's product demo, or a meeting recording and ask Gemini to summarise the key points, identify commitments, or flag inconsistencies.

Claude handles images well: paste a chart, a whiteboard photo, or a document scan and Claude analyses it competently. But Claude does not currently process audio or video files natively. For executives whose work involves reviewing recorded content, Gemini is the broader tool.

Score rationale: For text and image work, both tools are capable. For audio and video analysis, Gemini is the only option between the two.

Real-Time Web Search

As of April 2026: both tools include web search. Gemini uses Google Search grounding. Claude's search is available on paid plans. Capabilities in this area are evolving quickly.

Gemini's web search is more tightly integrated because it runs on Google Search. For executives who need current market data, recent regulatory announcements, or competitor news, Gemini's search grounding is more seamless: results feed directly into the response. Claude has web search, and it has improved significantly, but Gemini holds a practical edge on recency and depth.

Score rationale: For time-sensitive research, Gemini's Google-powered search is a practical advantage. For strategic analysis that doesn't require current information, this distinction rarely affects output quality.

Context Window (Practical Quality)

As of April 2026: Claude Opus 4.6+ supports up to 1M tokens — automatic on Max, Team, and Enterprise plans; Pro subscribers can opt in via Claude Code's /extra-usage command rather than getting it by default. Gemini 3.1 Pro (released February 2026) shipped with a 2M token context window available in the consumer app to Google AI Ultra subscribers; Gemini 2.5 Pro (1M tokens) is available on Google AI Pro. Verify current limits at your plan level before relying on large-context features.

Gemini now holds the larger consumer-available context window at the premium tier. Gemini 3.1 Pro on Google AI Ultra exposes 2M tokens directly in the app — roughly 1.5 million words, enough to process multi-year email archives, full legal discovery sets, or entire corporate document libraries in a single session. Claude's 1M on Max and Team is still substantial, but the spec-sheet advantage on raw capacity belongs to Gemini.

The comparison that matters now is quality, not size, and the two are different things. Claude's window is highly reliable: it maintains instructions, constraints, and earlier context consistently through long sessions without degrading. Gemini's larger window is technically impressive, but in our testing, reasoning quality on complex multi-document analysis holds up more consistently with Claude. That observation is qualitative rather than benchmark-proven.

For most executive use cases — long documents, complex briefs, multi-document analysis — both tools at their paid tier are more than sufficient. The edge cases where size alone matters favour Gemini 3.1 Pro at 2M. Where consistent reasoning across a long session matters more than raw capacity, Claude at 1M still earns the nod.

Score rationale: Gemini leads on raw capacity at the premium tier (2M vs 1M). Claude leads on reasoning consistency at the edges of a long session. Score reflects the quality trade-off, not size.
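The capacity figures in this section (1M and 2M tokens, with 2M described as roughly 1.5 million words) can be turned into a quick fit check. This is a rough sketch: the 0.75 words-per-token ratio is an assumption implied by those figures, real token counts vary by model, language, and formatting, and the archive size in the example is hypothetical.

```python
# Assumption: ~0.75 words per token, implied by "2M tokens, roughly 1.5 million words".
WORDS_PER_TOKEN = 0.75

CLAUDE_WINDOW_TOKENS = 1_000_000   # Claude on Max/Team/Enterprise, per this section
GEMINI_WINDOW_TOKENS = 2_000_000   # Gemini 3.1 Pro on Google AI Ultra, per this section


def tokens_to_words(tokens):
    """Estimate how many words a token budget holds."""
    return tokens * WORDS_PER_TOKEN


def estimated_tokens(word_count):
    """Estimate how many tokens a body of text will consume."""
    return word_count / WORDS_PER_TOKEN


def fits(word_count, window_tokens):
    """True if the estimated token count fits inside the window."""
    return estimated_tokens(word_count) <= window_tokens


archive_words = 1_200_000  # hypothetical multi-year email archive
print(tokens_to_words(GEMINI_WINDOW_TOKENS))        # 1500000.0
print(estimated_tokens(archive_words))              # 1600000.0
print(fits(archive_words, CLAUDE_WINDOW_TOKENS))    # False
print(fits(archive_words, GEMINI_WINDOW_TOKENS))    # True
```

The estimate ignores the space your prompt and the model's reply also take up in the window, so leave headroom rather than planning to fill it.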

Extended / Chain-of-Thought Reasoning

As of April 2026: Claude Opus 4.7 includes extended reasoning. Gemini 2.5 Pro and the Gemini 3 series include "thinking" modes. Both available on paid plans.

Both tools have extended reasoning modes that break down complex problems step by step. Claude's extended reasoning produces output that is more auditable — the reasoning steps are clearer, the qualifications are more precise, and the output is less likely to state confident conclusions where the evidence is ambiguous. For executives who need to understand the reasoning behind an AI-assisted analysis, not just the answer, that auditability matters.

Gemini's thinking mode is capable and improving rapidly. For nuanced judgment calls with significant organisational stakes, Claude's reasoning is more reliably conservative in ways that protect against confident errors.

Score rationale: If you want answers, use Gemini. If you want judgment, use Claude. Extended reasoning is where that difference is most visible.

Where Each Tool Breaks

Failure modes as of April 2026, based on current model behaviour and published benchmarks. Both tools are updated frequently — patterns may shift across model versions. Qualitative observations are marked as such.

Where Claude breaks:

- Not embedded in your existing apps. Claude's integration gap versus Gemini is real — it doesn't live inside Gmail, Google Docs, or Sheets natively. That said, Claude Projects significantly reduce the friction argument: you can maintain persistent context, upload reference files, and set standing instructions that carry across every conversation without re-explaining your role or context each time. The gap is genuine; it's just less severe than "context switch every time" if you set up Projects properly.

- Built for depth, not throughput. Claude is not optimised for generating large volumes of similar outputs fast (40 email variants, 30 product descriptions, bulk data extraction). For high-volume repetitive generation, purpose-built tools outperform it. This is a deliberate design trade-off, not a bug.

- Prompt quality matters more on lighter models. (Qualitative observation, based on testing — model-tier dependent.) On Sonnet and Haiku, Claude rewards well-structured prompts and returns weaker results from disorganised input. On Opus, this largely disappears — Opus is specifically built to handle ambiguous, messy, and poorly structured input and extract what matters from it. If you're on the Pro or Max plan using Opus, this failure mode doesn't apply the same way.

Where Gemini breaks:

- Structure over judgment on hard questions. (Qualitative observation — benchmarks show both tools are strong on reasoning.) On Vellum's LLM Leaderboard (April 2026), Claude Opus 4.7 scores 94.2% on GPQA Diamond and Gemini 3 Pro scores 91.9% — close, with Claude holding a small edge. The difference readers will actually feel isn't raw reasoning power. It's style: Gemini defaults to organising your question into a framework. Claude is more likely to give you a perspective on it. For strategic and political business problems — the kind that don't have a textbook answer — that style difference is the practical gap.

- Hallucination risk is task-dependent — and the open-domain numbers are concerning. This is the most important accuracy nuance. On grounded summarization tasks (where the model works from a provided document), Gemini 2.0 Flash recorded a ~0.7% hallucination rate — best in class at that time. But on the AA-Omniscience open-domain benchmark, when Gemini 3 Pro gets a question wrong, it fabricates a confident answer in roughly 88% of those incorrect attempts — it almost never admits it doesn't know. Google DeepMind's own FACTS benchmark suite, meanwhile, shows top models scoring around 68.8/100 on factuality — meaning even the best are wrong about 30% of the time across the measured dimensions. The practical lesson: Gemini's search grounding is genuinely strong when it's actually grounding. When it's not, verify everything. (Sources: Vectara hallucination leaderboard, DeepMind FACTS Benchmark Suite, AA-Omniscience via The Decoder.)

- Integration advantage evaporates outside Google. Gemini's core differentiator is Google Workspace. Move to Microsoft 365, Slack, Notion, or any non-Google stack and the integration advantage disappears entirely. What remains is a capable general-purpose AI — without the embedded workflow argument that justifies choosing it over Claude.

- Generic outputs at the ceiling. (Qualitative observation, based on testing.) Gemini's writing is clean and correct. But the gap that opens when you compare calibrated Claude output to Gemini output on the same brief, especially for executive communication, is consistent rather than occasional. Claude produces drafts closer to send-ready for high-register writing.
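The 88% AA-Omniscience figure in the hallucination bullet above is easy to misread as an overall error rate. It is conditional: it applies only to the questions the model already got wrong. The sketch below shows the arithmetic. The 88% comes from the sources cited in that bullet; the error rate and question count are hypothetical placeholders, because this comparison does not report an overall open-domain accuracy figure.

```python
def confident_fabrications(questions, error_rate, fabricate_given_wrong=0.88):
    """Expected number of confidently wrong answers.

    questions             -- how many open-domain questions were asked
    error_rate            -- share answered incorrectly (hypothetical input)
    fabricate_given_wrong -- share of incorrect attempts stated with confidence
                             rather than admitting uncertainty (~0.88 per the
                             AA-Omniscience figure cited above)
    """
    wrong = questions * error_rate
    return wrong * fabricate_given_wrong


# Hypothetical: 100 ungrounded questions, half answered incorrectly.
expected = confident_fabrications(100, 0.5)
print(round(expected))  # 44
```

The takeaway is the same as in the bullet: the fewer questions the model gets wrong, the less the 88% matters, which is why search grounding and verification carry so much weight.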

Pricing Comparison

Pricing as of April 17, 2026. Both vendors update plans frequently — verify at claude.ai/pricing and gemini.google/subscriptions before committing.

| Plan | Claude | Gemini |
|---|---|---|
| Free | Claude Sonnet, limited usage | Gemini 3 Flash, limited 3.1 Pro access |
| Individual (standard) | Pro: $20/month | Google AI Pro: $19.99/month |
| Individual (premium) | Max 5x: $100/month · Max 20x: $200/month | Google AI Ultra: $249.99/month |
| Team / business | Team Standard: $20–25/seat · Team Premium: $100/seat | Workspace + Gemini add-on: varies |
| Enterprise | Custom (SSO, audit logs) | Workspace Enterprise + Gemini |

Claude's premium tier works differently from Google's. Pro ($20/month) gives you access to all models including Opus 4.7 with standard usage limits. Max adds two tiers above that: Max 5x ($100/month) gives you 5× Pro's usage capacity, and Max 20x ($200/month) gives you 20× — both with priority access during peak demand and full Claude Code access. If you're a power user hitting Pro limits regularly, Max 5x is typically the right step up before committing to Max 20x.

Google's consumer subscription branding changed in 2026 — the plan formerly called "Google One AI Premium" is now Google AI Pro ($19.99/month). The premium tier is Google AI Ultra ($249.99/month), which unlocks Gemini 3.1 Pro and higher usage limits. If you were on the old Google One AI Premium plan, verify whether you've been migrated and at what price.

The team-level comparison still depends heavily on your existing Google Workspace relationship. If you're already on Business Plus or Enterprise, Gemini features may be partially included. Check your Google Workspace billing directly — the add-on economics vary significantly by org size and plan tier.

For organisations not on Google Workspace, Claude's Team plan is the simpler calculation — one subscription, no platform dependency. Team Standard runs $20–25/seat/month (annual vs monthly billing); Team Premium is $100/seat/month.
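To make the Claude Team figures concrete, here is a rough yearly cost sketch. It assumes that, of the $20–25 range, $20 is the annual-billing price and $25 the monthly-billing price (our reading of "annual vs monthly billing"), and it ignores taxes, seat minimums, and discounts. The 10-seat team and the `yearly_cost` helper are hypothetical.

```python
# Per-seat monthly prices from the pricing table (USD).
TEAM_STANDARD_ANNUAL_BILLING = 20.0    # assumed: price when billed annually
TEAM_STANDARD_MONTHLY_BILLING = 25.0   # assumed: price when billed monthly
TEAM_PREMIUM = 100.0


def yearly_cost(seats, per_seat_per_month):
    """Total cost for one year at a given per-seat monthly price."""
    return seats * per_seat_per_month * 12


seats = 10  # hypothetical leadership team
print(yearly_cost(seats, TEAM_STANDARD_ANNUAL_BILLING))   # 2400.0
print(yearly_cost(seats, TEAM_STANDARD_MONTHLY_BILLING))  # 3000.0
print(yearly_cost(seats, TEAM_PREMIUM))                   # 12000.0
```

The Gemini side has no equivalent one-line calculation because the Workspace add-on price varies by plan and org size, which is the point the pricing section makes.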

Best For (Use-Case Verdict)

| Use case | Pick | Why |
|---|---|---|
| Strategy & planning (standalone) | Claude | Better reasoning depth, less formulaic, holds nuance |
| Email & document writing | Claude | Voice calibration, lower AI-generic register |
| Google Docs / Gmail drafting | Gemini | Native embedded integration, no context switch |
| Video and audio analysis | Gemini | Claude doesn't process video or audio natively |
| Real-time market/news research | Gemini | Google Search grounding is faster and more current |
| Meeting prep (no Workspace) | Claude | Better stakeholder analysis and prompt-driven prep |
| Meeting summaries (Google Meet) | Gemini | Auto-generated summaries inside Meet natively |
| Long-document analysis (quality) | Claude | More reliable reasoning at the edges of a long session |
| Extreme volume context (2M tokens) | Gemini | Gemini 3.1 Pro on AI Ultra exposes 2M in-app; Claude maxes at 1M |
| Team rollout (Google org) | Gemini | Familiarity + embedded integration reduces adoption friction |
| Team rollout (non-Google org) | Claude | Simpler pricing, better standalone output quality |

Bottom line: Start with Claude. It's the better tool for the work that defines executive performance — strategy, communication, decisions, board prep. Add Gemini if your org is on Google Workspace and you want AI embedded in the daily flow of email and docs. That's a valid reason to add it. It's not a reason to replace Claude with it.

The Hidden Variable: Your Organisation's Stack

Most executives comparing Claude and Gemini are really answering a simpler question: which productivity ecosystem am I already in?

If your org is Google Workspace, the embedded integration is a real advantage — not marketing. AI that writes inside your email, edits inside your doc, and summarises your meeting before the call ends is meaningfully different from AI you switch to in a separate tab. That's Gemini's genuine argument, and it's worth taking seriously.

But here's what that argument doesn't change: Gemini's integration advantage is about where AI lives in your workflow. It's not about what the AI can do when the stakes are high. For the decisions that define your quarter — the hard personnel call, the board narrative that needs to hold up under scrutiny, the strategy memo your CEO will push back on — the integration advantage is irrelevant. What matters is output quality. And on output quality for executive work, Claude leads.

If your org is Microsoft 365, Gemini's integration advantage doesn't apply at all. The right comparison there is Claude vs Microsoft Copilot.

The Monday morning default: Open Claude. Use it for everything that requires actual thinking. If your org is on Google Workspace and you find yourself constantly switching tabs to draft emails or edit docs, add Gemini for that specific friction. Don't let the integration tail wag the thinking dog.

For how Claude fits into a complete executive AI system, see The Best AI Tools for Executives in 2026. For a 30-day plan to build the full system, see The 30-Day AI Build Plan.

Get 100 prompts built for how executives actually work.

The Executive AI Toolkit covers strategic thinking, communication, people decisions, meeting prep, and more — across whichever tool you choose to use.

$67. One purchase. No subscription.

Get the Executive AI Toolkit: $67

Related comparisons

- Claude vs ChatGPT: Which AI for Executive Work?

- Microsoft Copilot vs ChatGPT for Business

- Claude vs Microsoft Copilot for Executives

Benchmark Sources

Factual claims in this comparison are based on the following publicly available sources, verified April 2026:

- GPQA Diamond (graduate-level reasoning): Claude Opus 4.7 = 94.2%; Gemini 3 Pro = 91.9% — Vellum LLM Leaderboard / The Next Web

- SWE-bench Verified (real-world software engineering tasks): Claude Opus 4.7 = 87.6% — Vellum LLM Leaderboard / The Next Web. A directly comparable Gemini 3.1 Pro SWE-bench Verified figure was not cleanly published in the same harness as of this review; we've omitted a side-by-side rather than quote a figure we can't source.

- Hallucination on grounded summarization: Gemini 2.0 Flash ~0.7% — Vectara benchmark via AI Hallucination Report

- AA-Omniscience (open-domain fabrication): Gemini 3 Pro produces a confident false answer in ~88% of its incorrect attempts, rarely admitting uncertainty — The Decoder

- FACTS Benchmark Suite (Google DeepMind): Top models score around 68.8/100 on factuality — wrong roughly 30% of the time across the measured dimensions — DeepMind research blog

Qualitative observations (writing register, strategic analysis style, prompt sensitivity) are based on editorial testing and are labelled as such throughout this page.
