AI for D&I Initiatives: From Statements to Measurable Action (2026)

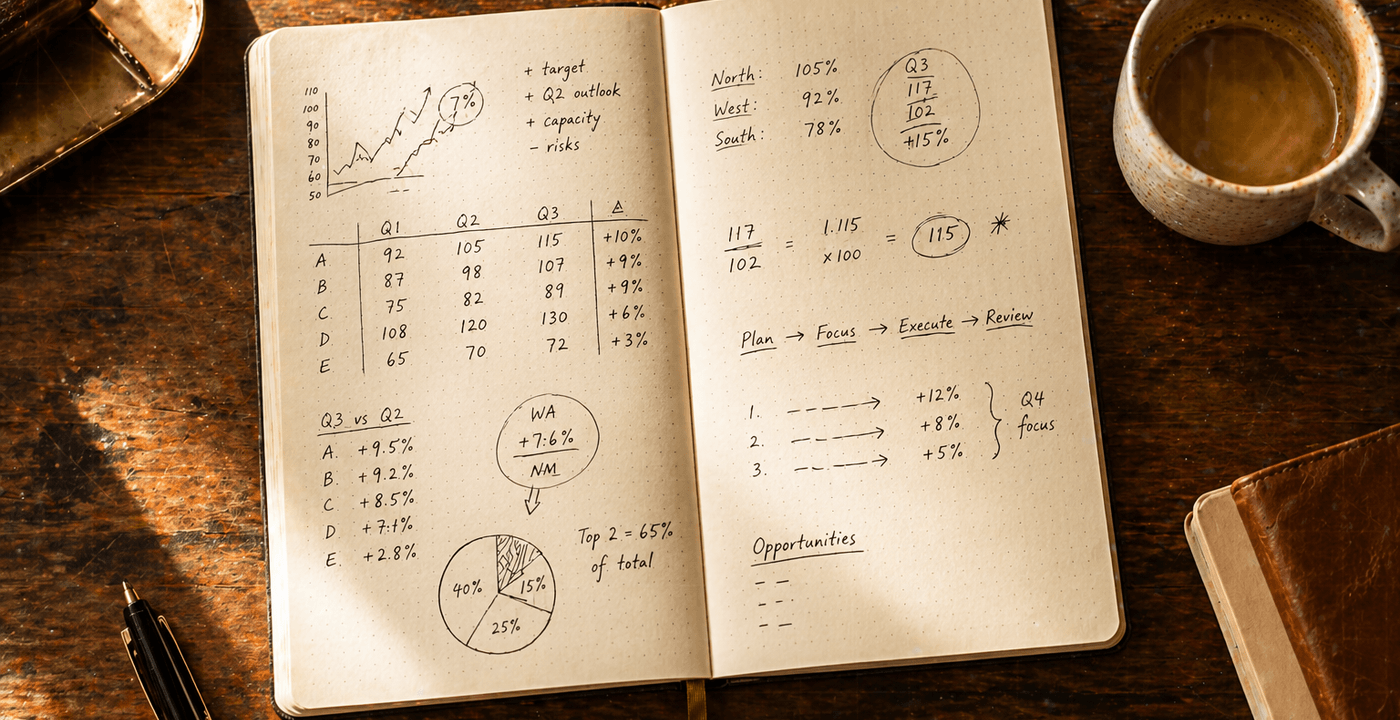

A 5-step AI workflow for senior leaders: audit the real gap with data you already have, set measurable commitments with owners, design programmes against validated root causes, draft communications that cite your own data, and build a reporting cadence with escalation teeth.

This article covers using AI to design, measure, and communicate diversity and inclusion initiatives for senior leaders who own the accountability — CEOs, HR leaders, and business unit heads. It focuses on turning commitments into programmes with measurable outcomes. It does not cover D&I strategy from scratch, compliance-specific programmes, or HR specialist tooling. Prompts work with Claude, ChatGPT, Copilot, or Gemini.

Most D&I initiatives stall not from lack of commitment but from lack of measurement. Programmes get launched, statements get published, and twelve months later no one can articulate what changed — because no one defined what change would look like in the first place.

Measurement is the diagnostic, not the cure. It is necessary — and not sufficient. D&I work also fails when leadership support is shallow, when managers don't change behaviour, when employees don't trust that this time is different from the last initiative, and when the person accountable for outcomes quietly deprioritises them when other pressures arrive. This article addresses the measurement problem because that's where most well-intentioned programmes fail on the design side. But a well-designed programme in a culture that resists it will still fail. Know which problem you're solving.

AI doesn't fix the underlying organisational conditions that produce inequity. But it can help you move from vague intentions to specific programmes with measurable targets and accountable owners — which is where most initiatives fail on the execution side. D&I becomes real when someone's operating rhythm changes, not when the statement gets better.


Step 1: Audit the Current State With Data You Already Have

Before designing any initiative, you need to understand the actual gap — not the assumed one. Most organisations have more relevant data than they use: headcount by level and demographic, promotion rates, pay band distributions, engagement survey results, attrition by group. The problem is that no one has assembled it into a coherent picture.

Paste this prompt:

"I want to build a factual baseline for our diversity and inclusion work before designing any new initiatives. Help me identify what data I should gather and what it would tell me.

Our organisation: [describe: size, industry, structure — e.g., "250-person technology company, three business units, engineering-heavy"]

For each of the following data sources, tell me:

1. What questions this data can answer about representation and equity

2. What gaps it can't answer — and what I'd need to gather separately

3. One specific metric I should calculate from this data that would tell me where the biggest gap is

Data I currently have access to: [list what's available — e.g., headcount by level and gender, promotion rates by year, pay band data, engagement survey scores, attrition rates]"

This prompt produces a data map — what you have, what it tells you, what it doesn't. The goal is to identify the one or two metrics that most clearly reveal where the gap is largest. Designing programmes before you've done this means you're solving the assumed problem, not the actual one.

Worked example: A COO at a 300-person professional services firm runs this prompt with access to headcount data (by level and gender), promotion rates from the past three years, and annual engagement survey results. The analysis shows that the firm's representation gap isn't at the entry level, where hiring is already balanced, but at the manager-to-director transition, where women's promotion rate is 40% lower than men's. That is a promotion-stage gap, not a pipeline-supply gap: the kind of pattern McKinsey's Women in the Workplace research highlights, where unequal promotion rates rather than supply drive women's underrepresentation in senior roles.
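The transition-point comparison behind this example is simple arithmetic once the counts are assembled. Below is a minimal Python sketch, assuming you can export, for each transition, how many people from each group were in the pool (eligible employees, or applicants for hiring) and how many advanced. `StageCounts`, `rate_ratio`, and `largest_gap` are hypothetical names, and the counts in the usage section are invented for illustration, not the firm's data:

```python
from dataclasses import dataclass


@dataclass
class StageCounts:
    """Hypothetical counts for one transition over the review period.

    "pool" is everyone eligible (applicants, for hiring); "advanced" is
    everyone who made the transition (hires, for hiring).
    """
    stage: str
    women_pool: int
    women_advanced: int
    men_pool: int
    men_advanced: int


def rate(advanced: int, pool: int) -> float:
    """Share of the pool that made the transition."""
    if pool <= 0:
        raise ValueError("empty pool: the rate is undefined")
    return advanced / pool


def rate_ratio(s: StageCounts) -> float:
    """Women's rate as a fraction of men's rate (1.0 means parity)."""
    men = rate(s.men_advanced, s.men_pool)
    if men == 0:
        raise ValueError(f"{s.stage}: no men advanced, so the ratio is undefined")
    return rate(s.women_advanced, s.women_pool) / men


def largest_gap(stages: list[StageCounts]) -> StageCounts:
    """The transition where women's rate falls furthest below men's."""
    return min(stages, key=rate_ratio)


if __name__ == "__main__":
    stages = [  # invented numbers, for illustration only
        StageCounts("hiring", 120, 60, 130, 65),
        StageCounts("manager_to_director", 50, 6, 60, 12),
    ]
    for s in stages:
        print(f"{s.stage}: ratio {rate_ratio(s):.2f}")
    worst = largest_gap(stages)
    print(f"Largest gap at {worst.stage}: "
          f"women's rate is {1 - rate_ratio(worst):.0%} lower than men's")
```

Expressing each gap as a ratio of rates, rather than a difference in headcount, is what lets you compare transitions of very different sizes on one scale.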

The COO's first instinct is to fix the pipeline by increasing the number of women applying for director roles. The data doesn't support that. The applicant pool is proportionate; the gap is in outcomes, not inputs. The most likely explanation isn't supply but something in how promotion decisions are made: who gets sponsor investment (a sponsor actively advocates for a candidate's promotion, whereas a mentor advises), how "readiness" is assessed, and whether criteria are applied consistently. The initiative that follows targets structured promotion criteria and sponsor accountability, not recruitment. That reframe only happened because the data was assembled before the solution was designed. Without it, the organisation would have invested in a pipeline programme that addressed the wrong problem.
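The input-versus-outcome check in this paragraph can be made explicit. Here is a minimal sketch under stated assumptions: you have the eligible pool, the applicants, and the promotions by group for one transition. `PromotionFunnel`, `diagnose`, and both threshold defaults are illustrative choices, not a standard method, and the counts are invented:

```python
from dataclasses import dataclass


@dataclass
class PromotionFunnel:
    """Hypothetical counts for one transition (e.g. manager to director)."""
    women_eligible: int
    men_eligible: int
    women_applied: int
    men_applied: int
    women_promoted: int
    men_promoted: int


def diagnose(f: PromotionFunnel, share_tolerance: float = 0.05,
             parity_floor: float = 0.95) -> str:
    """Classify a gap as an input (supply) or an outcome (decision) problem.

    Both thresholds are illustrative assumptions: share_tolerance is how far
    women's share of applicants may trail their share of the eligible pool;
    parity_floor is the lowest success ratio (women's / men's) treated as
    parity. Inputs are checked first.
    """
    if min(f.women_eligible + f.men_eligible, f.women_applied,
           f.men_applied, f.men_promoted) <= 0:
        raise ValueError("need an eligible pool, applicants from both groups, "
                         "and at least one promotion of a man")
    eligible_share = f.women_eligible / (f.women_eligible + f.men_eligible)
    applicant_share = f.women_applied / (f.women_applied + f.men_applied)
    success_ratio = (f.women_promoted / f.women_applied) / (f.men_promoted / f.men_applied)

    if eligible_share - applicant_share > share_tolerance:
        return (f"input gap: women are {eligible_share:.0%} of the eligible pool "
                f"but {applicant_share:.0%} of applicants")
    if success_ratio < parity_floor:
        return (f"outcome gap: applicant pool is proportionate ({applicant_share:.0%} women), "
                f"but women succeed at {success_ratio:.0%} of men's rate")
    return "no material gap at this transition"


if __name__ == "__main__":
    funnel = PromotionFunnel(women_eligible=50, men_eligible=60,
                             women_applied=20, men_applied=24,
                             women_promoted=6, men_promoted=12)
    print(diagnose(funnel))
```

With these invented counts, women make up the same share of the eligible pool and the applicant pool, so supply isn't the problem, while their success rate as applicants is 60% of men's: the outcome-side pattern the COO's data pointed to.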

Step 2: Set Measurable Commitments, Not Aspirational Targets

"We are committed to building a more diverse and inclusive organisation" is not a commitment. It's a statement. A commitment has a specific outcome, a timeframe, and an owner.

The failure mode is aspirational framing: "increase representation of X group at senior levels" with no baseline, no timeframe, and no definition of what "senior levels" means. Aspirational targets are impossible to hold anyone accountable to because no one can agree on whether they were met.
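If you track commitments in a spreadsheet or script, this test can be made mechanical: a commitment is only accountable once every element is filled in. A minimal sketch; `Commitment` and its field names are hypothetical, not a standard schema, and the values in the usage are illustrative:

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class Commitment:
    """A commitment in the shape described above: outcome, timeframe, owner.

    Field names are hypothetical, chosen for illustration.
    """
    statement: str
    metric: Optional[str] = None      # what is measured
    baseline: Optional[float] = None  # the current value of that metric
    target: Optional[float] = None    # the value it should reach
    deadline: Optional[str] = None    # e.g. "Q4 2027"
    owner: Optional[str] = None       # a role, not a name

    def missing(self) -> list[str]:
        """Elements still absent; a non-empty list means it's an aspiration."""
        return [fld.name for fld in fields(self)
                if fld.name != "statement" and getattr(self, fld.name) is None]


if __name__ == "__main__":
    vague = Commitment("Increase representation of women at senior levels")
    print(vague.missing())  # ['metric', 'baseline', 'target', 'deadline', 'owner']

    specific = Commitment(
        "Close the manager-to-director promotion gap",
        metric="women's promotion rate as a share of men's",
        baseline=0.62, target=0.85, deadline="Q4 2027", owner="CPO",
    )
    print(specific.missing())  # []
```

The check uses `is None` rather than truthiness so that a genuine baseline of zero still counts as filled in.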

Paste this prompt:

"Based on the data I have [paste key findings from Step 1], help me turn our D&I intentions into specific, measurable commitments.

For each commitment:

1. State it as a measurable outcome with a timeframe: "[Metric] will reach [target] by [date]"

2. Name the baseline — what is the current number?

3. Identify who is accountable (role, not name)

4. Name one leading indicator we can track quarterly to know if we're on track before the annual review

Our current priorities: [describe — e.g., "improve representation at the director level, reduce the promotion rate gap between demographic groups, improve belonging scores in the engineering team"]

Flag if any of my stated priorities are too vague to be measured as written — and suggest how to make them specific."

The leading-indicator instruction is important. An annual target measured annually produces a single data point per year, and by the time you see it, the year is over and there is nothing left to course-correct. A leading indicator tracked quarterly gives you three chances to adjust before you miss the annual target.

One warning on measurement: not all measurable things are equally meaningful. The most common D&I measurement trap is tracking activity instead of outcomes — counting training sessions delivered instead of whether behaviour changed, tracking representation at hiring instead of representation at advancement, reporting on programme participation instead of asking whether the gap closed. Vanity metrics create the appearance of progress while the underlying problem continues. When choosing what to measure, ask: if this number improves, does the actual gap close? If the answer is "not necessarily," you're tracking the wrong thing.

Worked example: A CPO at a 180-person software company uses this prompt with a promotion-gap finding like the one in Step 1. Her initial priority statement: "improve representation of women at director level." The prompt flags it as unmeasurable: no baseline, no timeframe, and "director level" undefined. The revised commitment: women's promotion rate from manager to director will reach 85% of men's rate by Q4 2027, up from a current baseline of 62% of men's rate. Owner: CPO. Leading indicator: the percentage of women manager-to-director candidates with an active internal sponsor, tracked quarterly. The reframe also names the mechanism (sponsorship), which shapes what the programme in Step 3 needs to target.
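The arithmetic behind a ratio commitment is worth making explicit, because the target moves with men's rate. A minimal sketch; the function names are hypothetical, and the 20% men's promotion rate and the 7-of-10 sponsor count are invented for illustration (the worked example gives only the ratios):

```python
def parity_ratio(women_rate: float, men_rate: float) -> float:
    """Women's promotion rate as a fraction of men's (1.0 means parity)."""
    if men_rate <= 0:
        raise ValueError("men's rate must be positive for the ratio to be defined")
    return women_rate / men_rate


def required_women_rate(target_ratio: float, men_rate: float) -> float:
    """Women's rate needed to hit target_ratio, assuming men's rate holds steady."""
    return target_ratio * men_rate


def sponsor_coverage(candidates_with_sponsor: int, candidates: int) -> float:
    """Quarterly leading indicator: share of women candidates with an active sponsor."""
    if candidates <= 0:
        raise ValueError("no candidates this quarter")
    return candidates_with_sponsor / candidates


if __name__ == "__main__":
    men_rate = 0.20                # invented for illustration
    women_rate = 0.62 * men_rate   # consistent with the 62% baseline ratio
    print(f"Baseline ratio: {parity_ratio(women_rate, men_rate):.0%}")
    print(f"Women's rate must rise from {women_rate:.1%} "
          f"to {required_women_rate(0.85, men_rate):.1%}")
    print(f"Sponsor coverage this quarter: {sponsor_coverage(7, 10):.0%}")
```

Because the commitment is a ratio, it could also be "met" if men's rate falls while women's stays flat; tracking the absolute promotion rate alongside it, as the Step 5 quarterly report does, guards against that.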


Step 3: Generate Programme Hypotheses, Then Validate

This is where most organisations make the same mistake: they deploy generic programmes (unconscious bias training, mentorship programmes, ERG funding) without connecting them to the specific gap they're supposed to close. The result is programmes that produce activity but not movement on the metric. (ERG funding, for instance, builds genuine community and belonging — but it rarely moves a promotion-rate or pay-gap metric on its own without structural changes to how decisions are made.)

Paste this prompt:

"We've identified the following specific gap: [describe — e.g., "women are promoted from manager to director at a 40% lower rate than men over a three-year period"]

Help me design an initiative that directly addresses this gap. I want:

1. The most likely root causes of this specific gap — what would the research suggest? (Not general D&I theory — causes specific to this type of gap at this transition point)

2. 3 programme options that address the most likely root cause — each with an estimated cost-to-implement range (low / medium / high) and expected timeline to see impact

3. For each option: what would success look like in 12 months? What does the leading indicator look like if it's working?

4. Which option would you recommend as a starting point, and why?"

The "most likely root cause" step separates this from generic programme design. A promotion rate gap at a specific career transition has different causes than a representation gap at hiring. Sponsorship programmes address different root causes than structured promotion criteria. Getting the root cause right first means the programme has a chance of moving the metric.

One important caution: the root causes AI generates here are hypotheses, not findings. They are informed by research patterns and your input data — but they are not a substitute for organisational diagnosis. Before committing to a programme design, validate the hypotheses with real input: manager interviews, employee listening sessions, review of how promotion decisions have actually been documented. AI can surface the most plausible explanations. Only people inside the organisation can confirm which ones are real here. Running a programme against a plausible-but-wrong root cause produces activity and no movement on the metric — exactly the failure mode this article is trying to prevent. The same gap-to-design discipline shows up in AI for performance reviews, where generic feedback masks the specific behaviour gaps you can actually act on.

Step 4: Draft Communications That Are Specific, Not Generic

D&I communications fail in a predictable way: they're too broad to be credible and too corporate to be trusted. "We believe in the importance of diversity" lands as noise to employees who've heard it before. Communications that build trust name the specific problem, state the specific commitment, and acknowledge what hasn't worked in the past.

Paste this prompt:

"I need to communicate our D&I commitments to [audience: e.g., all staff / leadership team / board]. Draft a [format: e.g., all-staff email / town hall talking points / board update] that:

1. Names the specific gap we're addressing (avoid generic language — use the actual data)

2. States the specific commitment we're making (outcome, timeframe, owner)

3. Acknowledges honestly what we've tried before and what we're doing differently this time — or, if this is a first initiative, why now

4. Explains what employees can expect to see change, and by when

5. Names how progress will be reported and to whom

Tone: direct, specific, no corporate clichés. Avoid phrases like "on this journey" or "we believe in the power of diversity." If any part of the draft defaults to vague language, flag it and suggest specific language instead.

Context: [paste your commitments from Step 2 and the initiative design from Step 3]"

The flag-vague-language instruction does real work. Left to default patterns, AI will produce exactly the kind of corporate boilerplate you're trying to avoid. Asking it to flag its own defaults produces draft-plus-critique in one pass. The same disciplined editing technique applies to almost any executive message — the executive communication workflow goes deeper on the pattern.

One thing the communication prompt can't do: manufacture credibility. Specific language without visible follow-through can actually reduce trust; employees who've heard specific commitments before and watched them quietly disappear are harder to persuade the second time. Measurement shared without accountability can also backfire: publishing the gap and then not closing it makes the problem more visible, not less. Specific, direct communication is necessary, but it only builds trust if the actions follow. That is why communication is the last step in programme design, not the first. If the commitment, the programme, and the accountability structure aren't solid, the communication exposes their weakness rather than showing progress.

Reporting with an escalation trigger has teeth. Reporting without one is documentation.

Step 5: Build the Reporting Cadence

A commitment without a reporting structure is a stated intention. Reporting quarterly against specific metrics — to the leadership team and, where appropriate, to the board — is what converts a programme from a project into accountability.

Paste this prompt:

"Help me design a simple reporting structure for our D&I commitments. I need:

1. A quarterly update template: what to report, to whom, in what format (1 page maximum)

2. The 3–5 metrics that should appear in every quarterly report — leading indicators, not just annual outcomes

3. A trigger for escalation: at what point does a lagging indicator require an active response rather than continued monitoring?

4. An annual review structure: what questions should the leadership team ask annually to assess whether the programme is producing real change?

Our commitments: [paste from Step 2]

Our programme: [paste from Step 3]"

The escalation trigger is the piece most reporting structures omit. Without one, a declining metric gets discussed but not acted on. With one — "if the leading indicator is below X for two consecutive quarters, we review the programme design, not just the metric" — the reporting structure has teeth.

Worked example: The CPO from Step 2 runs this prompt with her promotion-rate commitment, its sponsorship leading indicator, and her sponsor-assignment programme. The quarterly report is one page: the current sponsorship rate (the leading indicator), the director-level promotion rate year-to-date, and one paragraph explaining what changed and why. Her escalation trigger: if the sponsorship rate is below 70% for two consecutive quarters, the CPO reviews the programme design with the HR lead rather than just monitoring the number. The trigger requires two consecutive quarters because the first year showed that the rate dips in Q3 during mid-year leadership transitions without indicating a structural problem; a single low quarter shouldn't force a redesign. The trigger is what distinguishes a cadence that generates insight from one that generates anxiety without action.
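An escalation rule like this one is mechanical enough to encode, which removes the temptation to argue each quarter about whether a dip "counts". A minimal sketch; `should_escalate` is a hypothetical helper, and the quarterly readings are invented, with a one-off Q3 dip like the one described above:

```python
def should_escalate(readings: list[float], threshold: float, consecutive: int = 2) -> bool:
    """Return True if the latest `consecutive` quarterly readings are all below threshold.

    readings are in chronological order, oldest first. With fewer readings than
    `consecutive`, there isn't enough history to trigger, so this returns False.
    """
    if consecutive < 1:
        raise ValueError("consecutive must be at least 1")
    if len(readings) < consecutive:
        return False
    return all(r < threshold for r in readings[-consecutive:])


if __name__ == "__main__":
    # Invented quarterly sponsorship rates: a one-off Q3 dip in year one,
    # then two weak quarters at the start of year two.
    history = [0.78, 0.74, 0.66, 0.75, 0.68, 0.64]
    for quarter in range(1, len(history) + 1):
        fired = should_escalate(history[:quarter], threshold=0.70)
        action = "review programme design" if fired else "continue monitoring"
        print(f"Quarter {quarter}: {history[quarter - 1]:.0%} -> {action}")
```

The isolated Q3 dip never fires the trigger; only the two consecutive sub-70% quarters at the end of the series do.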

What you have after these five steps

A data-backed baseline that names the actual gap. A commitment with a specific metric, timeframe, and owner. A programme designed against a validated root-cause hypothesis. Communications that cite your own data instead of generic language. A reporting cadence with escalation teeth. None of this requires a D&I specialist. It requires a leader who treats the gap as a metric and applies the same accountability they apply to revenue or headcount. AI builds the structure fast. Making it real is yours to do.

Where This Breaks Down

The data doesn't exist or can't be gathered. In some organisations, demographic data isn't collected or isn't available at the level of detail needed for this analysis. If you genuinely can't measure the gap, you can't design a programme to close it. The first initiative in that case is data collection, not programme design. Committing to a metric you can't yet measure, and building the means to measure it, is better than setting a target around a number you're guessing at. Be aware, too, that purely quantitative data often reveals what the gap is without revealing why it exists. Some gaps only become legible when you combine operational data with qualitative input: employee interviews, listening sessions, manager conversations. Don't treat the absence of qualitative evidence as confirmation that the quantitative explanation is complete.

Employees don't trust the initiative. A well-designed programme can still fail because the people it's meant to serve don't believe leadership intends to follow through. This is especially likely when there has been a previous initiative that produced a statement and no visible change. Employee trust in D&I work is not given — it is earned through consistency over time, and it is lost faster than it is built. If the organisation has a trust deficit on this topic, programme quality alone won't close it. The programme needs to be visible, the reporting needs to be honest, and leaders need to be seen behaving differently — not just communicating differently. Mapping the stakeholder layer before announcing anything is often the difference between a programme that lands and one that gets dismissed before it launches.

Leadership commitment is performative, not operational. If the executive who owns this initiative changes priorities when it becomes inconvenient, the programme will stall regardless of its design quality. The most important variable in D&I programme success is whether the person accountable treats it as a business metric with the same consequence as a financial one — with the same scrutiny, the same escalation triggers, the same willingness to ask hard questions when progress stalls. AI can build the structure; it can't manufacture the will.

The programme is well-designed but the culture won't sustain it. A sponsorship programme in a culture that doesn't recognise or reward sponsors is an annual event that produces no promotions. A structured promotion rubric in a culture where decisions are made before the process starts is documentation for decisions already made elsewhere. Programme design and culture change are sequential, not simultaneous. Know which one you need first — and be honest about which one you have the appetite and authority to do.

The Toolkit That Goes Deeper

Go deeper with the Executive AI Toolkit.

The full People & Performance section of the Prompt Library — 15 prompts for team leadership scenarios including talent development, performance management, and stakeholder communication. The People workflows in Component 1 show how to build accountability structures that survive leadership changes.

$67. One purchase. No subscription.

D&I work doesn't fail because organisations don't care. It fails because intentions never get converted into measurable programmes with accountable owners. AI doesn't fix the deeper conditions, but it makes sure the structure isn't the reason the gap stays open.

AI workflows for executives, once a week. No filler. The Zintellex newsletter — subscribe below.
